💡 Defence in Depth

“Defence in depth”, sometimes also called “layering”, is a central concept in information security. It is the idea that security components should be designed to provide redundancy in the event that one of them fails.

As a bit of history, it is believed that the concept of defence in depth was first introduced by the Roman army as the Roman Empire reached the end of its expansion and entered a more stable period. During the expansion period, Roman territorial borders were heavily guarded, and enemy forces, even in small groups, were immediately attacked and repelled. It was a very aggressive strategy that resulted in quick expansion, as each attack gained a little more ground in enemy territory, but it required a lot of troops to maintain.

As the empire settled, the approach had to change and became more defensive, requiring fewer soldiers. The new strategy focused on slowing down attackers long enough to let the army, now regrouped and located slightly further from the border, gather and come to intercept them. To do so, the Romans put in place various types of defences one after the other, for example spikes, followed by a trench, followed by a wall. Each defence layer could fairly easily be defeated on its own, but the skills and tools needed to do so were very different for each of them. The invaders would first have to send men to remove the spikes, then get planks to cross the trenches, then get ladders to scale the walls. Even if they became very efficient at eliminating one of the layers, the others would still be there to slow them down. By then, the few guards posted nearby would have had time to alert the closest fort and call for reinforcements.

In computing, the concept of defence in depth is very similar. It works on the premise that each layer of defence can be penetrated, but the effort needed to penetrate them all is so substantial that either the attacker will give up, or detection will occur before all layers are passed.

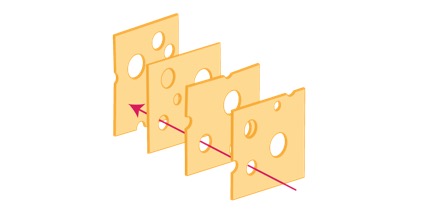

The Swiss cheese model

The defence in depth approach derives from a risk concept known as the cumulative act effect, more often referred to as the “Swiss cheese model”. In the Swiss cheese model, security systems are compared to multiple slices of Swiss cheese, stacked side by side. The characteristic holes in each slice represent weaknesses or vulnerabilities. Because the slices are stacked behind each other ("in series"), the chance of a hole traversing the stack from one side to the other is greatly reduced. From a risk perspective, this means that the likelihood of a single point of failure is reduced: even if one layer gets compromised or bypassed, the next one will hopefully catch the threat.
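To make the stacking effect concrete, here is a minimal Python sketch. It assumes each layer misses a given threat with some probability and that the layers fail independently of each other, which is an idealisation that real controls only approximate. Under that assumption, the chance of a threat passing every layer is the product of the individual miss rates. The function name `breach_probability` and the miss rates are hypothetical, chosen purely for illustration.

```python
from math import prod


def breach_probability(miss_rates):
    """Chance that a threat slips through every layer.

    Assumes (hypothetically) that layers fail independently, so the
    combined probability is the product of the per-layer miss rates.
    """
    if not miss_rates:
        raise ValueError("at least one layer is required")
    for rate in miss_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError(f"miss rate must be between 0 and 1, got {rate}")
    return prod(miss_rates)


# Illustrative numbers only: each layer misses 10% of threats.
single_layer = breach_probability([0.1])
three_layers = breach_probability([0.1, 0.1, 0.1])

print(f"one layer:    {single_layer:.4f}")
print(f"three layers: {three_layers:.4f}")
```

With these made-up rates, three mediocre layers together let through far fewer threats than any one of them alone. The benefit shrinks if the layers share a weakness, because the holes then tend to line up.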

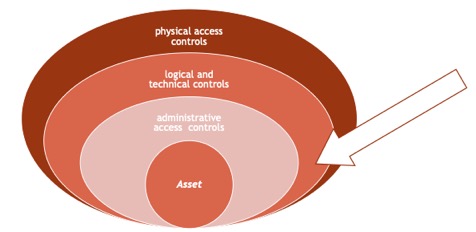

Applied to information security, the defence in depth approach puts the asset at the centre of the security design, with various layers of protection applied one after the other around it. The most common pattern consists of at least three layers:

- physical access controls, which physically limit or prevent access to the IT systems (fences, guards, CCTV...),

- logical and technical controls, which protect systems and resources (encryption, IPS, antivirus...), and

- administrative access controls, which provide guidance on how to handle systems and data (policies and procedures such as data handling or secure coding practices).

Each of these layers can in turn be divided into even more layers, creating an onion-type design where each security capability works independently from the others. This is an important point: because the layered approach is defined “in series”, each capability must be fully isolated from the other layers so that, regardless of whether any other layer fails, all the others continue to offer full protection.
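The isolation requirement can be checked mechanically against an inventory of controls. The following Python sketch flags any control that appears in more than one layer. The layer names, control names and the `shared_controls` helper are all hypothetical, and a real inventory would need a far richer notion of dependency than a shared name.

```python
from collections import defaultdict


def shared_controls(layers):
    """Return {control: [layer names]} for controls used by more than one layer."""
    usage = defaultdict(set)
    for layer, controls in layers.items():
        for control in controls:
            usage[control].add(layer)
    # Keep only controls that more than one layer relies on.
    return {c: sorted(names) for c, names in usage.items() if len(names) > 1}


# Hypothetical inventory, loosely based on the three layers listed above.
design = {
    "physical": {"fence", "guards", "cctv"},
    "technical": {"encryption", "ips", "antivirus"},
    "administrative": {"data-handling-policy", "secure-coding-guide"},
}

violations = shared_controls(design)
print(violations or "no control is shared between layers")
```

An empty result only shows that no control is listed twice. It does not prove the layers are truly independent, since two differently named controls may still rely on the same power supply, network or vendor.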

The basic rule of thumb is that:

- each layer must have multiple separate active protections – for example the physical layer could have a fence and CCTV – and

- each protection must not work for multiple layers – for example, the same camera must not be used for both CCTV in the physical